Neural filters: What Photoshop’s powerful new AI tools can do

The release of the new Photoshop neural filters at Adobe MAX in October was a magical moment. Audiences marveled as artificial intelligence (AI) changed the facial expression or age of a portrait subject with a single click. But the magic came from more than the excitement of innovation or the beauty of the technology. It also came from the clear potential of these filters to eliminate tedious workflows and lower barriers to creativity.

While the Smart Portrait neural filter got much of the buzz at MAX, it isn’t the only filter that makes it easier than ever to alter photos creatively. In this article, we’ll explore all eight of Photoshop’s new machine-learning-powered filters. Best of all, you can apply each of them in seconds, speeding up your workflow while expanding your creative options.

What are neural filters?

Neural filters use machine learning to make Photoshop smarter, enabling it to recognize elements of an image and edit or retouch photos much as a professional editor would. Because the underlying AI models are continually being improved, the results should get better over time.

Adobe partnered with Nvidia, which developed some of the machine learning libraries used in Photoshop, to create the neural filters. The two companies continue to work together to improve the released filters and develop new ones. So far, eight filters are available in the app: two fully released and six in beta.

Read on to learn more about the neural filters in Photoshop and how they can improve your workflow and jumpstart your creativity.

Featured neural filters

Skin smoothing

Skin smoothing is one of the most common requests professional photographers get, especially event photographers. Even amateur photographers are often asked by friends and family to remove distractions or smooth out portraits where the lighting wasn’t quite right. The Skin Smoothing filter strikes a balance: it gives you a simple, one-click way to remove blemishes and other distractions while preserving the characteristics of skin, such as pores, that make it look real.

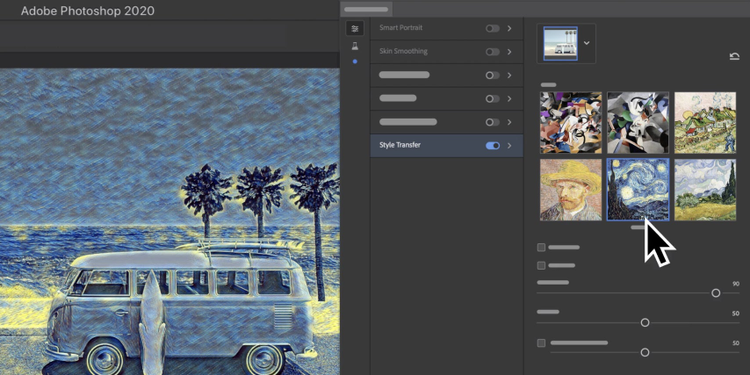

Style transfer

The Style Transfer filter applies a selected artistic style to your image. The concept isn’t new, but using AI to read the look of one image and transfer it onto another is a massive time saver. As the machine learning models evolve, the goal is for you to be able to choose any image, such as a Claude Monet painting, as the style source and have Photoshop apply its look to any other image you open. For now, Photoshop includes a library of preset artistic styles that you can apply with one click to give your photos new character and versatility.

Neural filters in beta

Smart portrait

The Smart Portrait filter has generated excitement for its ability to condense complex portrait-editing workflows into a few steps. With it, you can adjust a subject’s expression to convey emotions such as happiness, surprise, or anger, or change the apparent age of a subject’s face. You can also adjust hair thickness, the tilt of the head, and the direction of light on the subject.

Makeup transfer

Similar to the Style Transfer filter, the Makeup Transfer filter allows you to take a makeup style from a subject in one open image and apply it to a person in another. It focuses on the eyes and mouth, expanding your options for creating a specific look that fits your project or campaign.

Depth-aware haze

We’ve all seen countless wedding photos taken in the mountains or on the beach, with subjects in sharp focus against a hazy background. Creating this kind of depth effect by hand used to mean painting noise or haze into the background during editing. The new Depth-Aware Haze filter uses depth information to apply an environmental haze that grows more pronounced the farther objects are from the camera. It can also adjust the color temperature of the surroundings to make them warmer or cooler.
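Adobe hasn’t published how Depth-Aware Haze works internally. As a rough illustration of haze that thickens with distance, here is a minimal Python sketch of the standard atmospheric scattering model from computer vision, in which each observed color is I = J·t + A(1 − t) and the transmission t = e^(−βd) falls off with depth d. The function name `add_depth_haze`, the haze color, and the density value are hypothetical choices for this example, and the per-pixel depth values are assumed to be given.

```python
import math

# Hypothetical sketch: the textbook atmospheric scattering model,
# not Adobe's implementation of the Depth-Aware Haze filter.
def add_depth_haze(pixels, depths, haze_color=(0.9, 0.9, 0.95), density=0.5):
    """Blend each RGB pixel toward haze_color according to its depth.

    pixels:     list of (r, g, b) tuples, channels in [0, 1]
    depths:     distance of each pixel from the camera (same length as pixels)
    haze_color: airlight color the haze fades toward
    density:    haze density (beta); larger values give thicker haze
    """
    hazed = []
    for rgb, d in zip(pixels, depths):
        # Transmission falls off exponentially with distance:
        # t = 1 at the camera, approaching 0 for faraway objects.
        t = math.exp(-density * d)
        hazed.append(tuple(c * t + a * (1 - t) for c, a in zip(rgb, haze_color)))
    return hazed


# A subject at depth 0 and a background at depth 10, both the same green.
near, far = add_depth_haze([(0.2, 0.5, 0.1), (0.2, 0.5, 0.1)], [0.0, 10.0])
print(near)  # unchanged: (0.2, 0.5, 0.1)
print(far)   # almost entirely the haze color
```

Tinting `haze_color` toward orange or blue is one simple way to mimic a warmer or cooler look, though this too is an assumption for illustration rather than how the filter necessarily does it.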

Colorize

The Colorize filter brings vintage photos back to life by adding color to black-and-white images. The Photoshop team is also hard at work on several other neural filters that could be used alongside Colorize, including a Photo Restoration filter and a Dust and Scratch Removal filter. Grouping related filters into a single workflow is one more way the Photoshop team aims to streamline tasks.

Super zoom

With the Super Zoom filter, you can quickly zoom in on and crop an image while Photoshop adds detail to compensate for the loss of resolution. Like the fictional FBI investigators in movies who zoom in repeatedly on an image and enhance it each time, you can now zero in on part of a photo and blow it up to see more detail. The filter can’t recover detail the camera never captured, but it fills in plausible detail and removes distracting artifacts as you enlarge that section of the image.

JPEG artifacts removal

On a related note, the JPEG Artifacts Removal filter comes in handy when you’re in a pinch and have only a low-quality image. If the image was heavily compressed, for example when it was saved at a low quality setting for the web, this filter can remove the resulting JPEG compression artifacts. Based on hundreds of thousands of in-app survey responses, it is one of the best-reviewed Photoshop filters.

Looking ahead

The exciting thing about neural filters is that, because they rely on AI, their results can keep improving. The more you use the filters and report your experiences, the faster the Photoshop team can retrain the machine learning models and release new versions of the filters. You can also vote in Photoshop for new filters you’d like to see added in the future.
